February 7, 2026

Essai, The Language Exam Copilot

A side quest into Vertex AI, Supabase, and the art of forcing a language model to be actually useful. An AI-powered essay grader.

At the moment the project doesn't cover an entire language exam, just the writing section.

The Problem

A few months ago I was preparing for the TestDaF, a German language proficiency exam. If you have never had the pleasure, these exams all follow the same recipe: reading, listening, speaking, and writing. The writing section asks you to produce a structured essay on a given topic, and it gets graded on criteria like task achievement, coherence, vocabulary range, and grammatical accuracy.

The thing about practicing the writing section: you can write as many essays as you want, but without feedback, you are just guessing. If you are studying seriously and writing two or three essays a week it can be expensive to pay a human grader. Self-evaluation isn't an option eihter, even with the rubrics at hand, there are questions I don't know how to answer (e.g. "How is the student's control over grammar and punctuation?"). That's the point of me taking the exam, to have an idea of how well I'm doing with this language.

At some point I realized this problem was shaped exactly like the kind of thing language models are good at: structured evaluation against well-defined rubrics. Every major language exam publishes its scoring criteria. The rubrics are explicit, detailed, and consistent. A model that can follow instructions should be able to apply them.

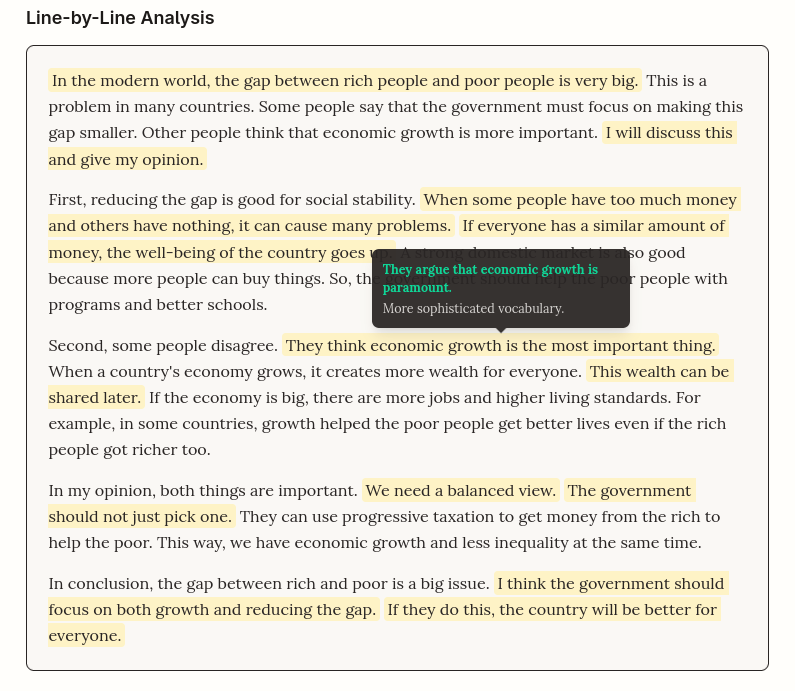

That's how this thing ("Essai") started. The idea is straightforward: you write/paste your essay, hit "Grade," and get back an overall score, per-criterion feedback, and (more interesting) sentence-level suggestions. I started with TOEFL since the English rubrics are the most widely available and easiest to validate, but the plan is to expand to other exams and languages, including the TestDaF that got me into this rabbit hole.

How It Works

The core idea is simple. You give the model an essay and a clear rubric, and it gives you structured feedback. But "simple" and "straightforward to implement" are different things, as usual.

Two (Parallel) Calls

Each evaluation triggers two separate calls to Gemini 2.0 Flash via Vertex AI, running in parallel two tasks: (1) it produces the overall band score and criterion-level feedback; (2) and walks through the essay sentence by sentence, offering improvements with explanations.

Why parallel? Although I might try different configurations in the future, for now, the scorer does not need to know about sentence-level suggestions, and the suggestion engine does not care about the final score. Maybe if they were set up sequentially, the general output could be then better understood given that the line-by-line analysis request is considering it in the prompt. But running them concurrently cuts the latency in half. And the two approaches produce good and consistent results from having access to the same rubrics.

Structured Output via Tool Declarations

This was the part that convinced me to use Vertex AI over OpenAI for this project. Vertex AI's function calling lets you define a JSON schema as a "tool," and the model is forced to return data matching that exact shape. No post-processing, no regex, no "please respond in JSON" prompt hacks.

export const gradeEssayTool = {

functionDeclarations: [{

name: 'gradeEssay',

parameters: {

type: 'OBJECT',

properties: {

overall_band_score: {

type: 'NUMBER',

description: 'A single estimated integer band score from 4 to 9.',

},

criteria_feedback: {

type: 'ARRAY',

items: {

type: 'OBJECT',

properties: {

criterion: { type: 'STRING' },

band_score: { type: 'NUMBER' },

positive_points: { type: 'STRING' },

improvement_points: { type: 'STRING' },

},

},

},

},

},

}],

};

The model receives this schema and returns valid, parseable JSON every time. On the client side, I validate everything again with Zod before rendering, but the tool declaration approach means I have never seen a malformed response in production. That is a big deal when you are building something that needs to feel reliable to a stressed-out exam student.

The Prompt

The evaluation prompt gives the model a persona (an expert writing coach) and enforces one golden rule: every piece of feedback must include a specific quote from the student's essay. This prevents the generic "your vocabulary could be better" feedback that makes most AI grading tools useless. If the model cannot point to a concrete sentence in your text, it should not be saying it.

The Stack

Why Vertex AI

The structured output via tool declarations I described above was the main reason. It just works, and I did not want to spend time wrestling with prompt hacks to get JSON out of a model. On top of that, Gemini 2.0 Flash is significantly cheaper than GPT-4o for this kind of structured grading task, and Flash Lite (which I use for question generation) costs almost nothing. The GCP ecosystem also meant I could set up Workload Identity Federation instead of storing API keys, which I'll get into below.

Supabase for Auth + Data

I went with Supabase because it gave me authentication and a database without having to wire up two separate services. OAuth with Google and GitHub works out of the box with their Auth UI React component, no custom backend code needed.

Also I can use Row Level Security. The policies live in the migration file, not in application code:

create policy "Users can read their own evaluations"

on public.evaluations for select

using (auth.uid() = user_id);

create policy "Anyone can view shared evaluations"

on public.evaluations for select

using (share_token is not null);

There is no way to accidentally bypass access control with a buggy API route. The database itself enforces that you can only see your own evaluations.

Workload Identity Federation

This was the most interesting infrastructure problem in the whole project, and probably the most under-documented pattern I have encountered.

The situation: Vercel serverless functions need to call Vertex AI. The standard approach is to store a GCP service account key as an environment variable. But a leaked key gives full access to your GCP project, which is not great.

Workload Identity Federation lets Vercel prove its identity to GCP without any stored secrets. The flow:

- Vercel issues an OIDC token for each serverless function invocation.

- That token gets exchanged with GCP's Security Token Service for a short-lived access token.

- The access token impersonates a GCP service account with only the permissions it needs (Vertex AI User).

export const vertexClient = new VertexAI({

project: process.env.GCP_PROJECT_ID!,

location: 'europe-central2',

googleAuthOptions: {

credentials: {

type: 'external_account',

subject_token_type: 'urn:ietf:params:oauth:token-type:jwt',

token_url: 'https://sts.googleapis.com/v1/token',

subject_token_supplier: { getSubjectToken: getVercelOidcToken },

},

}

});

The subject_token_supplier callback is the key piece. Vercel's SDK provides getVercelOidcToken(), which returns the OIDC token for the current invocation. No keys, no secrets, no rotation headaches. I spent a fair amount of time piecing this together from scattered documentation and GitHub issues. If you are trying to connect Vercel to any GCP service, this is the pattern.

Beyond the Grading

Once the core evaluation was working, I kept going (as one does).

I added shareable results early on because I wanted to send my scores to a friend who was also studying. Each evaluation can get a share token, which generates a public link at /share/[token] that anyone can view without logging in. You can toggle it on and off from the detail page. Simple, but it makes the whole thing feel like something you would actually use.

Then came progress tracking, which was the "just one more feature" that took longer than expected. It is a pure SVG chart, no chart library, that shows score trends over time across all five dimensions. I wanted users to have a reason to come back and track their improvement, and I wanted to avoid pulling in a dependency for what is essentially a few lines and circles.

There is also a question generator that uses Gemini 2.0 Flash Lite to produce practice prompts, so you do not have to go find one somewhere else before you can start writing. And rate limiting: five evaluations per day per user, twenty generated questions per day per IP. The evaluation count is just a Supabase query on the existing evaluations table, no extra infrastructure needed.

What's Next

I built this mostly for myself and as a demo project. It is not really designed with scale in mind, and I might end up restricting access at some point or just keeping it as a personal tool. That said, the architecture already supports swapping rubrics and prompt templates per exam type, so extending it to TestDaF, IELTS, DELF, DELE, or whatever else follows the same essay-in, feedback-out pattern should not require rethinking anything fundamental. The model does not care what language the essay is in, as long as the evaluation criteria are clear.

I thought it was a cool project, and I think there is room to keep working on it. If someone else happens to think so too, contributions are welcome.

Back to the main quest next.